We tested Sora 2, Kling 3.0, Wan 2.6, and every major AI video generator in 2026. Here are the 5 winners that actually deliver cinematic 1080p results.

2025 was the year of “waiting for Sora.” 2026 is the year of using everything else.

While OpenAI was busy perfecting physics engines behind closed doors, a funny thing happened: the rest of the world didn’t just catch up—they started shipping. I’ve been tracking this shift since the early days of LTX-2’s open-source launch, and honestly, the landscape has completely flipped in the last 30 days.

The “grainy GIF” era is dead. We are now in the Cinematic 1080p Era, where 2-minute clips, synchronized dialogue, and complex multi-shot narratives are table stakes. But here’s the catch: not all “HD” is created equal. I spent the last week burning through $500 of compute credits testing the latest models, from OpenAI’s just-updated Sora 2 to China’s shockingly fast Wan 2.6, to find out which tools belong in a professional workflow and which are just expensive toys.

Here are the 5 best AI video generators of 2026 that are actually worth your money.

1. OpenAI Sora 2 (The Titan Expands)

The Verdict: The physics king that finally let us “zoom out.”

For a long time, Sora was the “Apple” of AI video: polished, powerful, and maddeningly exclusive. But the February 9th “Extensions” update just changed the calculus. Before this, you had a 25-second clip and a dream. Now? You have an infinite canvas.

The new Extensions feature allows you to take any generated clip and “outpaint” it temporally or spatially. I tested this by generating a drone shot of a futuristic Tokyo and then asking Sora to “follow the car as it enters the tunnel.” It didn’t just extend the clip; it understood the velocity of the car and the lighting change of the tunnel. This level of physical coherence is exactly what we saw missing in early models like GPT-5.2’s initial multimodal demos.

Also, the “Image 2 Video with People” update (released Feb 4) is a massive deal for creators. You can finally create consistent characters without them morphing into Eldritch horrors every time they turn 90 degrees.

Key Specs:

- Resolution: 1080p Native (no upscaling fluff)

- Duration: 25s base + Infinite Extensions

- Standout Feature: World-class Physics Engine & Synced Audio

- Price: $20/mo (Included in ChatGPT Pro)

2. Kling 3.0 (The Director’s Cut)

The Verdict: The best “human performance” engine on the market.

If Sora is a physics simulator, Kling 3.0 is a casting director. Released quietly earlier this month by Kuaishou, Kling 3.0 has virtually solved the “uncanny valley” problem for human motion.

I ran a prompt for a “pianist playing a complex jazz solo, emotional close-up.” Most models turn fingers into spaghetti here. Kling 3.0? It nailed the knuckle articulation and the subtle head bob of the musician. It feels less like a simulation and more like a captured performance. This aligns with the “Physical AI” trend NVIDIA predicted at CES—we’re moving from generating pixels to generating movement.

Kling’s “AI Director” mode also allows for multi-shot sequencing. You can define a wide shot, a medium shot, and a close-up in a single prompt chain, and it maintains character consistency across all three. It’s the closest we have to a “text-to-movie” button right now.
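If you want to plan a wide/medium/close-up sequence before you spend credits, it helps to write the shot list down as data first. The sketch below is only that: a way to organize shots and flatten them into one numbered prompt. The `Shot` class, the `build_prompt_chain` helper, and the "Shot N (...)" text layout are all hypothetical names and conventions I made up for illustration. This is not Kling's API or its official prompt syntax.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Shot:
    """One entry in a shot list (hypothetical structure, not a Kling type)."""
    framing: str   # e.g. "wide", "medium", "close-up"
    action: str    # what happens in this shot
    seconds: int   # intended length of the shot


def build_prompt_chain(character: str, shots: list[Shot]) -> str:
    """Flatten a shot list into one numbered prompt.

    The character description is repeated in every shot so each line
    carries the same anchor text for consistency.
    """
    lines = []
    for i, shot in enumerate(shots, start=1):
        lines.append(
            f"Shot {i} ({shot.framing}, {shot.seconds}s): {character}, {shot.action}"
        )
    return "\n".join(lines)


shots = [
    Shot("wide", "walks onto a dim jazz club stage", 5),
    Shot("medium", "sits down at the piano and begins to play", 5),
    Shot("close-up", "hands move across the keys during the solo", 5),
]

prompt = build_prompt_chain("a pianist in a grey suit", shots)
total_seconds = sum(shot.seconds for shot in shots)

print(prompt)
print(f"Total planned length: {total_seconds}s")
```

Keeping the running total visible is the useful part: three 5-second shots land exactly on the 15s clip length listed in the specs below, so you know the sequence fits in one generation before you hit render.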

Key Specs:

- Resolution: Native 4K (Best in class)

- Duration: 15s clips / Up to 2 mins with credits

- Standout Feature: AI Director Mode & Human Acting

- Price: Credit model; Pro starts ~$37/mo

3. Wan 2.6 (The Speed Demon)

The Verdict: The rapid prototyping tool that replaced Luma in my workflow.

Speed matters. When you’re iterating on a concept, waiting 4 minutes for a render kills the creative flow. Enter Wan 2.6 from Alibaba. This thing is fast. Really fast.

In my benchmarks, Wan 2.6 clocked the fastest “Time to First Frame” of any model I tested. It’s clearly built for the TikTok/Reels generation—the aesthetic is “ready for Instagram” right out of the box. High saturation, sharp range, distinct subjects. It reminds me of the efficiency leap we saw with MiniMax’s coding models—Chinese labs are optimizing for inference speed aggressively.

While it lacks the deep physics understanding of Sora, it makes up for it in sheer volume. You can generate 10 variations in the time it takes Sora to render one. For social media managers or ad agencies, this is the killer app.

Key Specs:

- Resolution: 1080p

- Duration: 15s clips

- Standout Feature: Blazing fast inference speed

- Price: Open weights + Cloud API (Pay for compute)

4. Runway Gen-4 (The Filmmaker’s Scalpel)

The Verdict: Still the only tool for professional “Surgery.”

Here’s the thing about AI video: sometimes you don’t want a “slot machine” result. You want specific control. Runway Gen-4 (and the upgraded 4.5 Turbo) remains the filmmaker’s choice because of Motion Brush.

Being able to highlight a cloud and say “move left at speed 5” while keeping the rest of the scene static is a superpower. The new Director Mode in Gen-4 gives you granular control over camera focal length, ISO, and shutter angle. It feels less like prompting a chatbot and more like operating a camera.
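If shutter angle is new to you, it is just a film-camera way of expressing exposure time per frame: exposure time = (angle / 360) / frame rate. The snippet below works through that standard cinematography relation. It says nothing about how Runway interprets the setting internally, and `exposure_time` is a name I picked for the example.

```python
from fractions import Fraction


def exposure_time(shutter_angle_deg: int, fps: int) -> Fraction:
    """Exposure time per frame, in seconds, for a rotary-shutter model.

    exposure = (shutter_angle / 360) / fps
    """
    if not 0 < shutter_angle_deg <= 360:
        raise ValueError("shutter angle must be in the range (0, 360] degrees")
    if fps <= 0:
        raise ValueError("fps must be positive")
    return Fraction(shutter_angle_deg, 360 * fps)


# Compare a few common shutter angles at 24 fps.
for angle in (45, 90, 180, 360):
    print(f"{angle:>3} degrees at 24 fps: {exposure_time(angle, 24)} s per frame")
```

The classic "film look" is 180 degrees at 24 fps, which works out to 1/48 s per frame. Smaller angles mean shorter exposures and crisper, choppier motion; larger angles mean more motion blur.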

It’s not the cheapest option, but for commercial work where a client says, “Can you make the logo fly in slightly slower?”, Runway is the only tool that doesn’t require a complete re-roll.

Key Specs:

- Resolution: 4K Upscaled

- Duration: 10s Base

- Standout Feature: Motion Brush & Camera Controls

- Price: $12/mo (Standard), Unlimited ~$76/mo

5. Google Veo 3.1 (The Ecosystem Juggernaut)

The Verdict: The most accessible high-end model you probably already have.

Google has played this smart. Instead of just launching a standalone app, they’ve baked Veo 3.1 into everything—YouTube Shorts, Google Workspace, and even Google TV.

The quality of Veo 3.1 is shocking. It has the best native audio generation of the bunch, thanks to DeepMind’s audio research. The sound effects aren’t just generic stock noises; they are physically simulated based on the video content. If a glass breaks in a Veo video, the sound matches the size of the glass.

For creators already in the Google ecosystem (which is… everyone), Veo is frictionless. It’s also powering the new YouTube AI features we covered recently, making it the default engine for millions of creators.

Key Specs:

- Resolution: 1080p / 4K

- Duration: 60s+

- Standout Feature: Native Audio & Workspace Integration

- Price: Included in Google AI Premium ($20/mo)

Comparison: The 2026 Video Generator Showdown

| Feature | Sora 2 | Kling 3.0 | Wan 2.6 | Runway Gen-4 | Veo 3.1 |
|---|---|---|---|---|---|
| Best for | Cinematic realism, physics-accurate single shots. | Human motion, lip-sync & expressive characters. | Multi-shot narratives with native audio/lip sync. | Creative control, editor workflows, production pipelines. | Mobile-first/high-fidelity clips, Gemini/Vertex integration. |
| Max resolution (native) | 1280×720 (720p) typical. | Up to 4K (native in some modes). | Up to 1080p (native). | Native ~1280×768 (or similar); upscalable to 4K. | 720p–1080p native; 4K upscaling available. |
| Max duration (single gen) | ~15s (25s for Pro in some plans). | ~15s (extended short clips). | 5 / 10 / 15s multi-shot options. | ~10–16s per generation (can be extended via editor). | Typical generations are short (≈4–8s in evaluations); product exposes short clips with stitching/upscaling options. |
| Pricing model | Per-second / credits; example rates shown on API docs (e.g. $0.10/s). | Pay-per-use / subscription & enterprise tiers (variable). | Credit/API pricing; generally positioned as lower-cost per second. | Subscription tiers with credit allotments; upscaling/actions consume extra credits. | Credit/API pricing via Gemini/Vertex; per-second pricing varies (recent cuts announced). |
| Vibe / Strength | Photoreal, physics-aware, cinematic single shots. | Expressive character motion, realistic lip sync. | Fast, narrative-oriented, audio-synchronised reels. | Production tooling + fine control (keyframes, upscales, editor). | High fidelity for short clips; strong platform integrations (Gemini apps, Vertex). |
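Per-second pricing is easy to underestimate once you start re-rolling. Here is a rough back-of-the-envelope calculator using the $0.10/s example rate from the table. Treat that rate as a placeholder, since actual pricing varies by model, resolution, and plan. The function name and the 10-variation batch are assumptions for illustration, and amounts are kept in integer cents to avoid floating-point rounding.

```python
def clip_cost_cents(rate_cents_per_second: int, seconds: int, variations: int = 1) -> int:
    """Cost in cents of generating `variations` clips of `seconds` each."""
    if rate_cents_per_second < 0 or seconds < 0 or variations < 0:
        raise ValueError("rate, seconds, and variations must be non-negative")
    return rate_cents_per_second * seconds * variations


RATE_CENTS = 10        # $0.10 per second, the example rate from the table
BUDGET_CENTS = 50_000  # $500 of credits

single = clip_cost_cents(RATE_CENTS, seconds=15)
batch = clip_cost_cents(RATE_CENTS, seconds=15, variations=10)
budget_seconds = BUDGET_CENTS // RATE_CENTS

print(f"One 15s clip:         ${single / 100:.2f}")
print(f"Ten 15s variations:   ${batch / 100:.2f}")
print(f"Seconds a $500 budget buys at this rate: {budget_seconds}")
```

At this example rate a single 15-second clip costs $1.50, but ten variations of the same shot cost $15.00, which is why cheap, fast models are attractive for the exploration phase.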

What This Means For You

We are witnessing the “Commoditization of Reality.”

Just six months ago, generating a coherent 10-second video was a technical achievement. Now, with tools like Wan 2.6 and Kling 3.0, it’s a commodity. The barrier to entry isn’t technology anymore; it’s taste.

This shift mirrors what we saw in the coding agent wars: the models are getting so good that the “prompt engineer” is dying, and the “director” is being born. Your ability to visualize a scene, understand pacing, and tell a story is now your only moat.

My Advice:

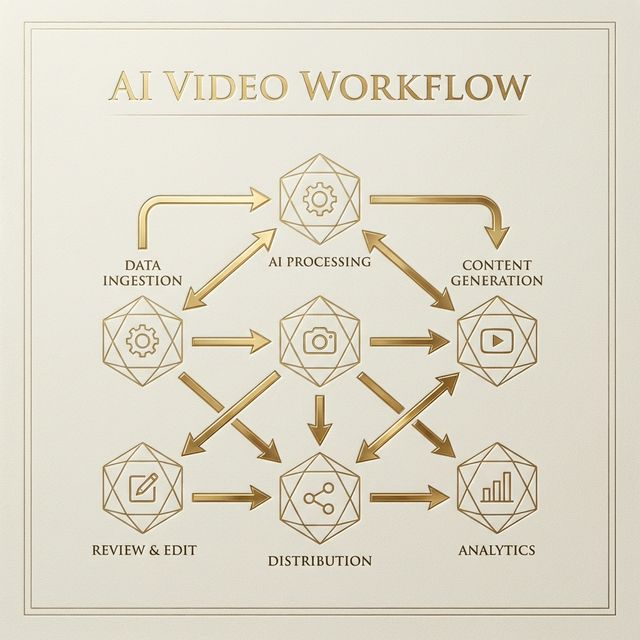

1. For Speed: Use Luma or Wan 2.6 to storyboard and test ideas rapidly.

2. For Production: Take your best seeds to Sora 2 or Kling 3.0 for the final high-res render.

3. For Control: If you need to fix a specific shot, import it into Runway.

The tools are here. The excuse that “AI video looks janky” expired in February 2026. Time to start shooting.

The Bottom Line

If I had to pick just one subscription for 2026? Kling 3.0 takes the crown for pure creative versatility, especially for human-centric storytelling. But if you’re already paying for ChatGPT Pro, Sora 2 is unmatched for physics-heavy b-roll.

FAQ

Is Sora 2 available to everyone now?

Yes, as of late 2025/early 2026, Sora 2 is available to ChatGPT Pro ($20/mo) users in most major regions.

Can I use these generated videos commercially?

Mostly yes. Runway, Kling (Paid), and Luma (Paid) grant commercial rights. Sora usage rights depend on your specific Enterprise/Pro agreement, so check the latest terms.

Which tool is best for music videos?

Kling 3.0 and Veo 3.1 are the top picks due to their superior handling of audio-visual synchronization and longer clip durations.