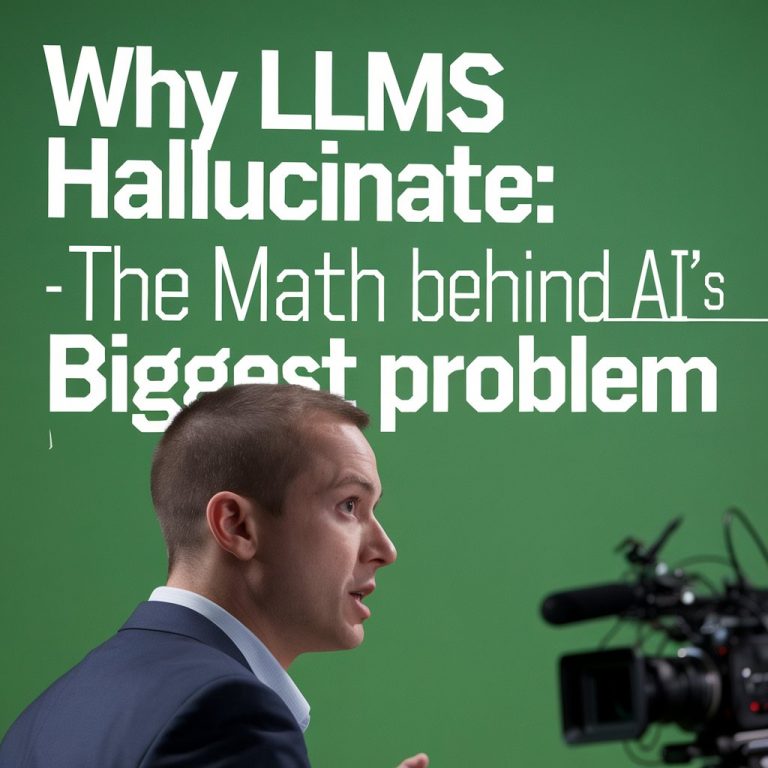

LLMs hallucinate because of next-token prediction mechanics and quantization. Here’s why 4-bit models hallucinate 3x more than 8-bit, and how RAG, RLHF, and CoT actually work to fix it.
