StepFun’s Step 3.5 Flash delivers 350 tok/s with 196B MoE architecture, 74.4% on SWE-bench Verified, and Apache 2.0 license. Deep technical analysis of China’s fastest agentic coding model vs Gemini 3 Flash and DeepSeek R1.

Intel just did something nobody expected. Not another incremental bump. Not a modest 15-20% improvement. The Arc…

Anthropic’s groundbreaking research reveals AI coding assistants may be destroying developer skills. Study shows 17% knowledge drop with no speed gains. The data is alarming.

hf-mem is a lightweight CLI tool that estimates Hugging Face model VRAM usage without downloading weights. Includes new KV cache estimation features.

LTX-2 by Lightricks is the first open-source AI video model with native audio generation, 4K 50fps output, and blazing fast inference. Here’s why “silent AI” is dead.

GPT-5.2 derived closed-form solutions for Erdős problems. Grok optimized Bellman equations. The 2026 prediction wasn’t hype. It was a deadline.

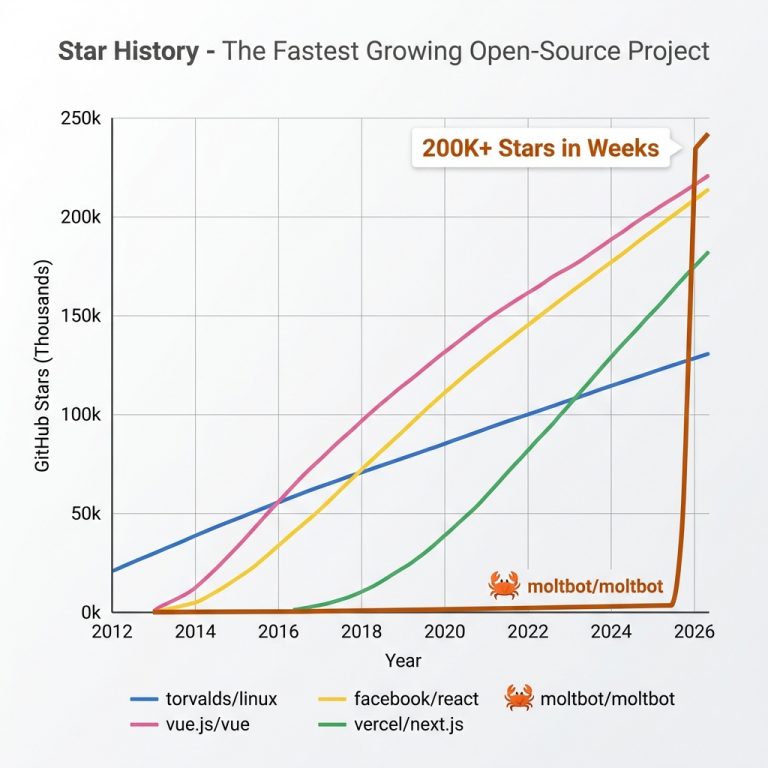

AI agents on Moltbook just created “Crustafarianism”. Is it a glitch, a training data reflex, or the first signs of digital consciousness?

LLMs hallucinate because of next-token prediction mechanics and quantization. Here’s why 4-bit models hallucinate 3x more than 8-bit, and how RAG, RLHF, and CoT actually work to fix it.

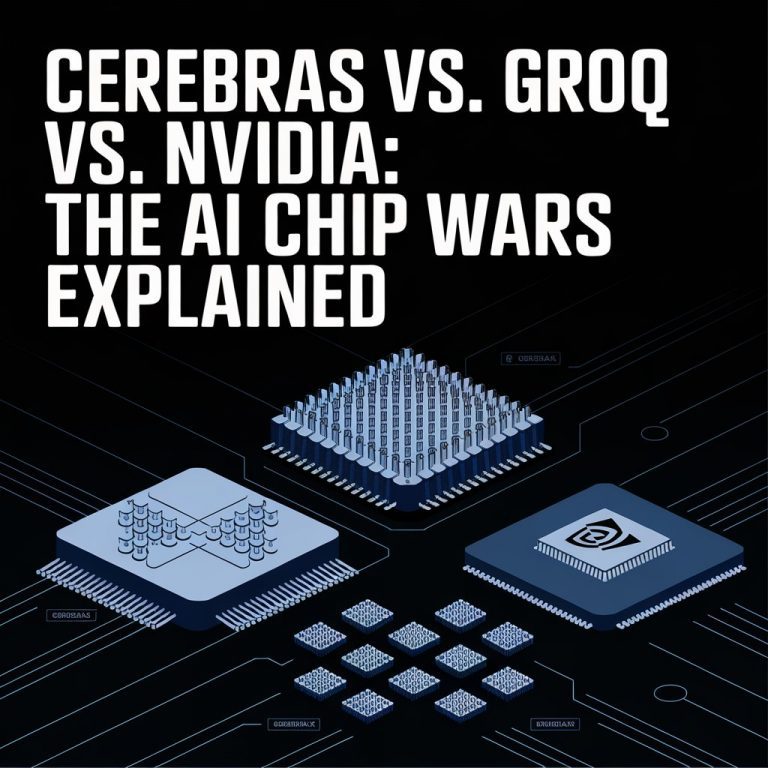

Cerebras is 21x faster than NVIDIA Blackwell with 7,000x memory bandwidth, yet NVIDIA owns 95% of the market. Here’s why the fastest chip rarely wins.

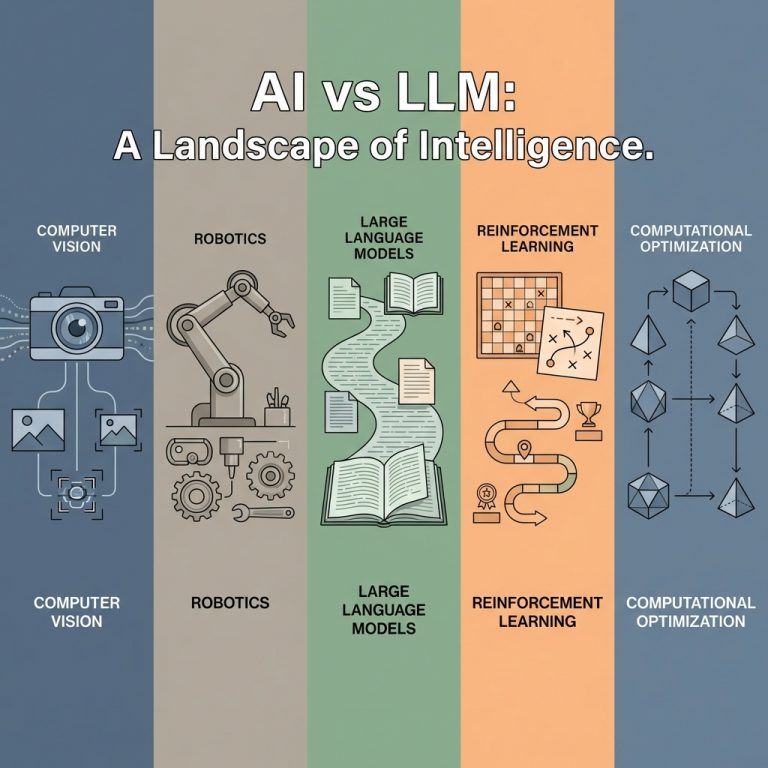

Discover the real difference between AI and LLMs. From computer vision to robotics, learn why AI is far more than ChatGPT and how scaling laws are reshaping the industry.