The internet is already flooded with reactions to Google DeepMind’s new Lyria 3 music generator. Depending on which side of the timeline you are reading, you will inevitably hear two narratives: either the music industry is terminally dead, or this is just another expensive parlor trick.

Yes, the model can generate three-minute, studio-quality 48kHz tracks. Yes, it understands when a verse should organically transition into a chorus with a screaming guitar solo. But if you’re only looking at the output duration, as the mainstream tech press is, you’re completely missing the structural earthquake.

While everyone marvels at how cleanly it handles vocal generation across eight different languages (complete with time-aligned lyrics), what caught my eye isn’t the runtime duration. It’s how Google finally, fundamentally killed the “audio drifting” problem.

If you’ve played with Suno V5 or Udio V4, you know exactly what I am talking about. The struggle is universal: an AI track starts out strong, establishing a solid rhythmic baseline and chord progression. But by minute two, the harmonic progression begins to wander like a drunk jazz pianist. The model “forgets” the key it started in. The rhythmic pocket gets loose. The song structure collapses into a statistical soup.

Lyria 3 fixes this drift by abandoning traditional sequential generation in favor of latent diffusion on highly compressed temporal audio latents. That architectural shift changes the economics of audio generation entirely, creating a moat that open-source challengers are going to have a brutal time crossing.

The Coherence Engine: Why Latent Diffusion Beat Block Autoregression

To understand why Lyria 3 is a breakthrough, we first have to agree on a fundamental constraint: generating music is not like generating code, and it’s certainly not like generating text.

Text is a sequence of discrete, bite-sized tokens. You predict the next word, and as long as the logic holds across the current context window, the sentence makes sense. Music, however, is a high-dimensional, continuous waveform packed with extreme, unforgiving long-range dependencies. A minor chord played softly at the 0:15 mark needs to resolve properly at the 2:45 mark in order for the human brain to perceive the song as “real.”

The Mathematical Problem with Autoregression in Audio

Historically, the AI industry tried to solve music generation using the exact same hammer they used to build Large Language Models (LLMs): standard autoregression. This means laying down audio tokens sequentially, block by block.

The problem? Autoregressive models suffer from severe context degradation over long horizons. Just as we recently saw with Gemini 3.1 Pro’s bench-maxing issues, where the model would get stuck in infinite logic loops when executing long terminal commands, autoregressive audio models suffer a “context collapse.” By the time the model is rendering the second chorus, the influence of the initial intro riff has all but vanished from its working memory. It hallucinates a bridge that belongs in an entirely different song.

The Diffusion-Transformer (DiT) Shift

Google DeepMind realized that treating audio like text tokens is a hardware-inefficient trap. So, for the primary Lyria 3 Pro model, they completely threw out the autoregressive playbook and used Latent Diffusion Models (LDMs) paired with Diffusion-Transformers (DiTs).

To understand the difference, I like to use a physical analogy. Treat the autoregressive approach like laying down bricks for a driveway. You put one brick next to the other. If you make a slight angle error on brick 10, by the time you reach brick 1,000, your driveway is curving right into the neighbor’s living room. You can’t see the end of the driveway while you are laying the bricks.
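The driveway analogy is easy to put numbers on. Here is a minimal sketch, assuming 20 cm bricks and a single one-degree mistake at brick 10 (both values are my own illustrative choices, and `lay_bricks` is a made-up helper). Every later brick inherits the heading of the one before it, so nothing ever corrects the error.

```python
import math

def lay_bricks(num_bricks: int, angle_error_deg: float,
               brick_length_m: float = 0.2) -> float:
    """Return the sideways drift in meters after laying bricks end to end.

    Each brick copies the heading of the previous one, which is the
    sequential dependency the analogy is about.
    """
    heading = 0.0
    sideways = 0.0
    for i in range(num_bricks):
        if i == 10:
            # The one-off mistake: brick 10 is placed slightly rotated.
            heading += math.radians(angle_error_deg)
        sideways += brick_length_m * math.sin(heading)
    return sideways

drift = lay_bricks(num_bricks=1000, angle_error_deg=1.0)
print(f"Sideways drift after 1,000 bricks: {drift:.2f} m")
```

A one-degree slip is invisible on brick 10 and several meters wide by brick 1,000. A real autoregressive model is worse off than this toy, because it can add a fresh small error at every step rather than just once.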

Latent diffusion is like a sculptor carving a statue out of a massive block of marble. The model looks at the entire three-minute block of noise all at once. Instead of laying down audio chronologically, it iteratively chips away the noise across the entire timeline simultaneously.
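To make the sculptor picture concrete, here is a deliberately tiny toy. It is not a trained diffusion model and says nothing about Lyria's internals: the "denoiser" is a stand-in that already knows the clean target, where a real system would use a learned network to estimate it. The `target` list is a hypothetical verse/chorus energy curve. The only point is the loop shape: every pass updates every frame of the timeline, instead of finishing frame 1 before starting frame 2.

```python
import random

def toy_denoise(target, steps=20, seed=0):
    """Refine a whole noisy timeline at once, a little on every pass."""
    rng = random.Random(seed)
    # Start from pure noise covering the full timeline.
    timeline = [rng.gauss(0.0, 1.0) for _ in target]
    errors = []
    for step in range(steps):
        # Stand-in for a learned denoiser: nudge EVERY frame toward the
        # clean estimate in the same pass. The blend grows each step so
        # the final pass lands on the target.
        blend = 1.0 / (steps - step)
        timeline = [x + blend * (t - x) for x, t in zip(timeline, target)]
        errors.append(max(abs(x - t) for x, t in zip(timeline, target)))
    return timeline, errors

# Hypothetical "song structure": quiet verse, loud chorus, repeated.
target = [0.2] * 8 + [0.8] * 8 + [0.2] * 8 + [0.8] * 8
timeline, errors = toy_denoise(target)
print("worst-frame error per pass:", [round(e, 3) for e in errors])
```

The worst-frame error shrinks on every pass across the whole track at once, which is the opposite failure profile from the driveway: there is no "late" part of the song that was generated without sight of the early part.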

By operating on highly downsampled, continuous latent representations (compressing the acoustic data into a latent rate as low as ~21.5Hz), Lyria 3 Pro can easily hold the entirety of a three-minute song inside its active memory window. It uses sophisticated cross-attention layers injected with text and timing embeddings (essentially marking exactly where “0:45” and “2:15” are on the timeline) to ensure that the verse and the chorus are mathematically aware of each other.
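The arithmetic behind that claim is worth writing out. This is a back-of-the-envelope sketch that takes the ~21.5Hz latent rate and the three-minute, 48kHz figures at face value. The `latent_index` helper is my own illustrative name, not anything from Google's tooling, and the counts are per audio channel.

```python
SAMPLE_RATE_HZ = 48_000   # output audio rate quoted above
LATENT_RATE_HZ = 21.5     # approximate latent frame rate quoted above
TRACK_SECONDS = 180       # a three-minute song

raw_samples = SAMPLE_RATE_HZ * TRACK_SECONDS           # per channel
latent_frames = round(LATENT_RATE_HZ * TRACK_SECONDS)
compression = raw_samples / latent_frames

def latent_index(seconds: float) -> int:
    """Map a timestamp to the latent frame that covers it (illustrative)."""
    return int(seconds * LATENT_RATE_HZ)

print(f"raw samples per channel: {raw_samples:,}")
print(f"latent frames:           {latent_frames:,}")
print(f"temporal compression:    ~{compression:,.0f}x")
print(f"0:45 -> latent frame {latent_index(45)}")
print(f"2:15 -> latent frame {latent_index(135)}")
```

Roughly 8.6 million samples per channel collapse to a few thousand latent frames, which is a sequence length a transformer can attend over in one go. That is what makes "the whole song in the window" plausible.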

The model explicitly defines the intro, verse, chorus, and bridge in the latent space before a single microsecond of audible waveform is ever rendered to your speakers. This is why Lyria 3 doesn’t drift. It knows how the song ends before it even begins.

The RealTime Anomaly: Reverting the Architecture

But here is where the story gets incredibly interesting. While the tech press is focused on Lyria 3 Pro, Google quietly detailed an experimental variant called Lyria RealTime. And in a fascinating twist, Lyria RealTime actually does use block autoregression instead of latent diffusion.

Why would they regress to an architecture they just proved was inferior for structural consistency?

The answer is the universal constraint of computing: latency. Latent diffusion is computationally massive. Because it has to look at the entire three-minute block simultaneously and iteratively denoise it over dozens of passes, you cannot stream it in real time. You hit “generate,” and you have to wait for the accelerators to finish the entire track before you hear anything.

Google knew this was unacceptable for interactive applications—like dynamic video game scoring or live DJ tools. So they built Lyria RealTime. It dumps the global coherence of diffusion in favor of block autoregression, spitting out audio in raw 2-second chunks with sub-2-second latency. It enables infinite, steerable streaming where the user can change the genre mid-song.
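Here is a rough sketch of what block-autoregressive streaming looks like as a control loop. Everything in it is hypothetical: the `stream_chunks` generator, the `style_schedule` dictionary, and the four-chunk context window are my own stand-ins, and no audio is actually synthesized. It only shows the shape of the trade-off: each two-second chunk can be emitted as soon as it exists, the style can change mid-stream, and old chunks fall out of the context.

```python
from typing import Dict, Iterator, List, Optional

CHUNK_SECONDS = 2.0  # chunk length described above

def stream_chunks(style_schedule: Dict[int, str], total_chunks: int,
                  context_chunks: int = 4) -> Iterator[dict]:
    """Yield chunk descriptors one at a time, conditioned on recent history."""
    history: List[dict] = []
    style: Optional[str] = None
    for i in range(total_chunks):
        # Steering: the user may switch style at any chunk boundary.
        style = style_schedule.get(i, style)
        # Only the most recent chunks are visible to the next prediction.
        context = [c["index"] for c in history[-context_chunks:]]
        chunk = {
            "index": i,
            "start_s": i * CHUNK_SECONDS,
            "style": style,
            "context": context,
        }
        history.append(chunk)
        yield chunk  # playable immediately, before later chunks exist

chunks = list(stream_chunks({0: "ambient", 3: "techno"}, total_chunks=6))
for c in chunks:
    print(c)
```

By chunk 5 the context no longer contains chunk 0. That is the price of streaming: the listener hears audio almost immediately, but the model has lost direct sight of how the piece began.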

What Google effectively did was build two entirely different architectures under the same Lyria brand, acknowledging that you cannot currently cheat physics: you must choose between near-flawless temporal coherence (diffusion) and endless low-latency streaming (autoregression).

The Imperceptible Constraint: The Math of SynthID

Every discussion regarding generative media inevitably crashes headfirst into the safety and governance rails. Predictably, Google’s PR team proudly claims that every single millisecond generated by the Lyria 3 model is automatically embedded with “SynthID for Audio.”

Most tech reporters treat this as a boring corporate checkbox—a footnote at the bottom of the press release. I treat it as a massive adversarial challenge, and honestly, an engineering marvel. It is arguably the most important feature Google shipped with this release.

Watermarking audio is not new, but historically, watermarks have been pathetic. They relied on injecting metadata tags into the file header. If a malicious actor wanted to strip the watermark to pass off an AI song as a real Drake track, all they had to do was run the file through a free online MP3 converter to strip the metadata.

SynthID is fundamentally different. It is not metadata; it is physics holding hands with cryptology.

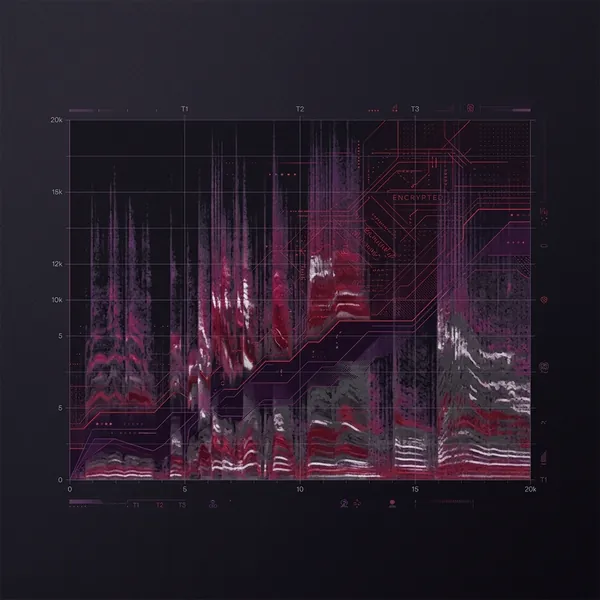

Spectrogram Amplitudes and In-Place Embedding

SynthID embeds its signature directly into the acoustic waveform. The algorithm takes the generated audio and mathematically transforms the raw waveform into a two-dimensional spectrogram—a visual map plotting time on the X-axis and frequency on the Y-axis.
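If you have never built a spectrogram by hand, this is the whole idea in a few lines. It is a teaching sketch using a naive DFT over non-overlapping frames, with a sample rate, frame size, and test tone I picked so the numbers come out cleanly. Production systems use overlapping, windowed frames and an FFT, and nothing here reflects SynthID's actual front end.

```python
import cmath
import math

def spectrogram(samples, frame_size):
    """Magnitude spectrogram: one row per time frame, one column per bin."""
    rows = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        row = []
        for k in range(frame_size // 2 + 1):
            # Naive DFT for frequency bin k of this frame.
            acc = sum(x * cmath.exp(-2j * math.pi * k * n / frame_size)
                      for n, x in enumerate(frame))
            row.append(abs(acc))
        rows.append(row)
    return rows

SAMPLE_RATE = 6400   # Hz, illustrative
FRAME = 64           # samples per frame, so each bin is 100 Hz wide
tone = [math.sin(2 * math.pi * 800 * n / SAMPLE_RATE)
        for n in range(FRAME * 4)]

spec = spectrogram(tone, FRAME)
peak_bins = [max(range(len(row)), key=row.__getitem__) for row in spec]
print("peak bin per frame:", peak_bins)
print("peak frequency (Hz):", [b * SAMPLE_RATE // FRAME for b in peak_bins])
```

An 800 Hz tone lands in bin 8 of every frame, because each bin spans 6400 / 64 = 100 Hz. Time runs down the rows, frequency across the columns: that grid is the canvas a spectrogram-domain watermark gets painted onto.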

Once the audio is represented as a spectrogram, the SynthID embedder injects a highly distributed, statistically correlated pattern directly into the visual representation of the sound wave. It subtly shifts the amplitude (volume) of specific, micro-sliced frequency bands.

Think of it like mixing a microscopic amount of ultraviolet dye directly into a bucket of blue paint. To the human eye (or human ear), the wall looks exactly the same. The listening quality is entirely unaffected. The watermark is physically imperceptible. But when you shine the right UV light—Google’s statistical detector—over the wall, a glowing, indisputable pattern emerges.
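The UV-dye idea can be sketched with a classic spread-spectrum-style toy. To be clear, this is not SynthID's algorithm, and I am not aware of a full public specification for it. The functions `key_pattern`, `embed`, `score`, and `detect`, the 5% strength, and the random "spectrogram" are all assumptions for illustration. A secret key seeds a pattern of tiny up/down nudges to spectrogram magnitudes, and the detector checks whether the magnitudes correlate with that pattern.

```python
import math
import random

def key_pattern(key: int, n: int) -> list:
    """Pseudo-random +1/-1 pattern derived from a secret key."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]

def embed(mags, key, strength=0.05):
    """Nudge each magnitude up or down by at most `strength` (here 5%)."""
    pattern = key_pattern(key, len(mags))
    return [m * (1.0 + strength * p) for m, p in zip(mags, pattern)]

def score(mags, key):
    """Average correlation between log-magnitudes and the key's pattern."""
    pattern = key_pattern(key, len(mags))
    logs = [math.log(m) for m in mags]
    mean = sum(logs) / len(logs)
    return sum(p * (v - mean) for p, v in zip(pattern, logs)) / len(logs)

def detect(mags, key, strength=0.05):
    """Flag the audio if the correlation clears half the embed strength."""
    return score(mags, key) > strength / 2

# Stand-in for a flattened spectrogram: 20,000 positive magnitudes.
host_rng = random.Random(1)
clean = [host_rng.uniform(0.5, 1.5) for _ in range(20_000)]
marked = embed(clean, key=42)

# Crude degradation: random gain wobble of up to 20% on every bin.
noise_rng = random.Random(2)
degraded = [m * noise_rng.uniform(0.8, 1.2) for m in marked]

print("clean flagged:    ", detect(clean, key=42))
print("marked flagged:   ", detect(marked, key=42))
print("degraded flagged: ", detect(degraded, key=42))
print("wrong key flagged:", detect(marked, key=7))
```

No single bin moves by more than 5%, yet across thousands of bins the nudges add up to a signal the keyed detector can pick out, even after random gain changes much larger than the watermark itself. This toy would not survive a tempo shift or a re-recording, though. Handling those is where the real engineering lives.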

Surviving the Analog Hole

Because SynthID alters the frequency content of the music itself, it is extremely hard to remove with standard adversarial tricks. The watermark pattern is redundant and distributed across the entire timeline.

Google’s internal testing demonstrates that the SynthID signature remains aggressively machine-detectable even if an attacker actively tries to break it. You can push the track through brutal 64kbps MP3 compression, explicitly stripping out high-frequency data. You can artificially shift the core tempo up by 20%. You can inject white noise over the track.

And here is the true terror for malicious actors: the watermark survives the “Analog Hole.” You can literally play the Lyria 3 track out of a cheap laptop speaker, record it using an iPhone voice memo app in an echoing room, upload that dirty recording to YouTube… and Google’s detector will still ping it as AI-generated with statistical confidence.

To wash out the SynthID watermark, you would have to inflict so much aggressive band-pass filtering and spectral manipulation on the file that the actual music would be completely destroyed and unlistenable.

This isn’t just a win for copyright lawyers. It sets a new gold standard for AI governance. As the web floods with synthetic data, enterprise platforms will refuse to ingest raw media unless its origin can be verified at the spectrogram level.

The TPU Economics: Why Open-Source Will Struggle

While the architecture and safety measures are fascinating, the physical deployment of Lyria 3 reveals Google’s true macroeconomic strategy. They aren’t trying to build the ultimate consumer toy; they are trying to dictate the entire enterprise orchestration layer by leveraging their colossal hardware moat.

If you are a solo developer trying to replicate something like Lyria 3 Pro using an open-source pipeline, you are immediately going to hit a wall entirely defined by VRAM (Video RAM).

In recent months, we’ve seen brilliant open-weight leaps like the Qwen3.5-122B-A10B architecture, which uses MoE (Mixture of Experts) routing to allow massive models to run on somewhat accessible hardware. LLMs are getting cheaper and lighter. But audio latent diffusion is moving in the exact opposite direction.

The $1.20/Hour Compute Requirement

Running high-fidelity latent diffusion with temporal cross-attention over three continuous minutes of 48kHz stereo audio demands a very large amount of accelerator memory, with multi-gigabyte tensors shuffled in and out on every iterative denoising step. A workload like that is likely to overwhelm an Apple M-series Max chip or a standard desktop RTX 4090.

By confining Lyria 3 to the Gemini app and, more importantly, making it available to developers only through Google AI Studio and Vertex AI, Google is essentially soft-locking the industry.

The inference backbone for Lyria relies entirely on Google’s custom TPU v5e (Tensor Processing Unit) infrastructure. The TPU v5e is heavily optimized for XLA (Accelerated Linear Algebra), the compiler behind the JAX matrix computations that underpin diffusion workloads.

Currently, on-demand TPU v5e pricing sits at roughly $1.20 per chip-hour. While cloud-scale commitments can drop that to ~$0.54 per chip-hour, the reality is stark. For a startup to build a competitor to Lyria 3 from scratch, they don’t just need world-class math PhDs; they need to lease entire interconnected pods of TPU v5e instances just to handle the experimental training loops, before even thinking about the devastating server costs of hosting a production endpoint where millions of users are generating 3-minute songs.
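To get a feel for what those rates mean, here is a quick calculation. The two per-chip-hour prices are the ones quoted above. The 256-chip pod and the 30-day run are purely hypothetical numbers I picked for illustration, not anything Google or a real startup has published.

```python
ON_DEMAND_USD_PER_CHIP_HOUR = 1.20   # rate quoted above
COMMITTED_USD_PER_CHIP_HOUR = 0.54   # rate quoted above

def lease_cost(chips: int, hours: float, rate: float) -> float:
    """Total cost of leasing `chips` accelerators for `hours` at `rate`."""
    return chips * hours * rate

# Hypothetical experiment: a 256-chip pod running for 30 days straight.
chips = 256
hours = 24 * 30

on_demand = lease_cost(chips, hours, ON_DEMAND_USD_PER_CHIP_HOUR)
committed = lease_cost(chips, hours, COMMITTED_USD_PER_CHIP_HOUR)
discount = 1 - COMMITTED_USD_PER_CHIP_HOUR / ON_DEMAND_USD_PER_CHIP_HOUR

print(f"chip-hours:        {chips * hours:,}")
print(f"on-demand cost:    ${on_demand:,.0f}")
print(f"committed cost:    ${committed:,.0f}")
print(f"commitment saving: {discount:.0%}")
```

Under these assumptions, a single month-long experiment costs six figures at list price, and that is one training run, before any hyperparameter sweeps or production serving.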

Google doesn’t have to worry about an open-source project matching Lyria 3’s quality next week, because the open-source community literally cannot afford the electricity and silicon required to run the inference at scale. This allows Google to securely position Lyria 3 as an elite, premium enterprise API.

The Market Impact: Overhauling Multimodal Generation

So who actually needs a $1.20/hour TPU-backed audio generator? Why would the enterprise care?

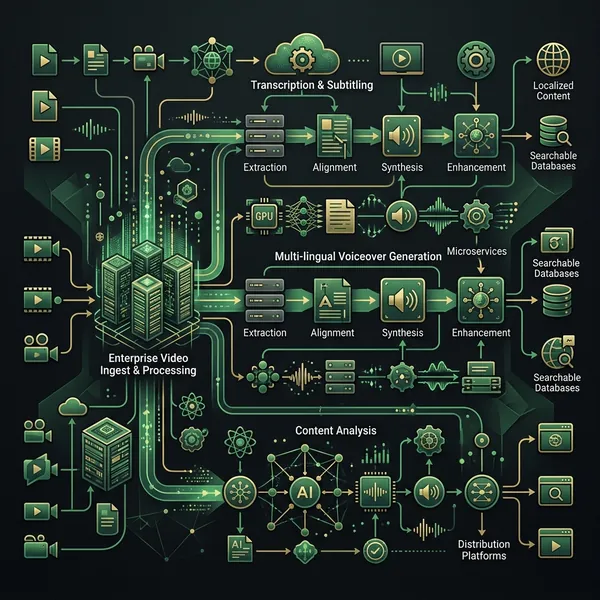

The answer lies in multimodal orchestration. Right now, AI video generation is undergoing a massive renaissance. Models like Sora, Veo, and the incredibly fast Helios 14B are creating stunning visual fidelity. But they all possess a crippling weakness: they are silent.

If you are a marketing firm or a localized ad agency leveraging AI to generate hundreds of iterative video commercials a day, your bottleneck is sound design. You currently have to take your silent, AI-generated video and manually dump it into a timeline editor. You then have to hunt for royalty-free stock music, manually cut it on the beat, fade out the volume when the voice-over starts, and export it back out. That human-in-the-loop requirement breaks the automated scaling advantage.

Lyria 3 natively accepts video inputs. The cross-attention mechanisms we discussed earlier aren’t just looking at text prompts; they are interpreting visual motion.

The enterprise pipeline for Lyria 3 looks like this: An autonomous agentic system orchestrated by tools like OpenClaw or Claude Co-Work dictates a script. That script is sent to an AI video generator. The silent visual MP4 is immediately passed via API directly into Vertex AI and handed to Lyria 3.

Lyria mathematically analyzes the pacing, lighting, and movement within the video. It generates an entirely original, imperceptibly watermarked, 48kHz soundtrack where the drum hits literally trigger perfectly in sync with the visual cuts in the video, while generating a completely localized Spanish vocal track based on a provided text file. It then returns the fully multiplexed, final video back to the publisher.
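As a thought experiment, the hand-offs in that pipeline can be sketched as plain function calls. Every name below (`generate_video`, `score_video`, `mux`, `run_pipeline`, and both dataclasses) is a hypothetical stub I wrote for illustration. None of them is a real Vertex AI, Lyria, or video-model API call, and the stubs return canned values instead of generating media.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class VideoClip:
    path: str
    cut_times_s: List[float]   # timestamps of visual cuts

@dataclass
class Soundtrack:
    sample_rate_hz: int
    drum_hits_s: List[float]   # where the drum hits land
    vocal_language: str
    watermarked: bool

def generate_video(script: str) -> VideoClip:
    """Stub for an AI video generator: returns a silent clip with cuts."""
    return VideoClip(path="ad_silent.mp4", cut_times_s=[0.0, 2.5, 5.0, 9.0])

def score_video(clip: VideoClip, lyrics: str, language: str) -> Soundtrack:
    """Stub for the music model: aligns drum hits to the visual cuts."""
    return Soundtrack(sample_rate_hz=48_000,
                      drum_hits_s=list(clip.cut_times_s),
                      vocal_language=language,
                      watermarked=True)

def mux(clip: VideoClip, track: Soundtrack) -> dict:
    """Stub for combining picture and sound into one deliverable."""
    return {"video": clip, "soundtrack": track, "output": "ad_final.mp4"}

def run_pipeline(script: str, lyrics: str, language: str = "es") -> dict:
    """Script in, finished ad out, with no human step in between."""
    clip = generate_video(script)
    track = score_video(clip, lyrics, language)
    return mux(clip, track)

result = run_pipeline("30-second sneaker ad", lyrics="(Spanish lyric text)")
print(result["output"], result["soundtrack"])
```

The interesting design point is the middle call: the music step takes the clip itself as input, so the timing information (the cuts) flows straight into the soundtrack rather than being matched by a human editor afterward.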

No humans. No Adobe Premiere timelines. No royalty negotiations.

The Bottom Line

Lyria 3 is impressive not because it “sounds good.” Lots of AI models sound good for 15 seconds. It is a genuine breakthrough because DeepMind explicitly solved the long-form audio drifting problem by forcibly pivoting to hardware-taxing latent diffusion, utilizing entire TPU clusters to mandate structural coherence.

Simultaneously, they aggressively solved the data provenance nightmare by turning the analog physics of sound against itself, burying the SynthID cryptographic signature so deeply in the spectrogram that destroying the watermark guarantees the destruction of the audio file itself.

Google just set the absolute baseline for professional, enterprise-grade AI audio. The cost of entry into this specific generative arena is no longer measured in how well you can scrape data; it is measured in the sheer brutal capacity of your hardware orchestration layer.

If your startup is still wrapping a front-end API around an open-source sequential loop generator, you are officially building obsolete technology.

FAQ

Does Lyria 3 support stem separation out of the box?

No. Unlike competitors such as Suno V5, which boasts independent vocal and instrumental tracks, Lyria 3 currently outputs a flattened, mixed 48kHz stereo file. You still need separate stem-separation tools to isolate vocals and instrumentals if you intend to do professional multi-track mixing and mastering.

Can I strip the SynthID watermark by drastically changing the pitch or tempo?

No. SynthID is embedded across a wide band of the frequency spectrum. Shifting the pitch or adjusting the tempo does not break the relative positioning of the watermark’s amplitude adjustments, so Google’s statistical detector can still identify the spectral pattern.

How much local GPU VRAM do I need to run Lyria 3?

You cannot run Lyria 3 locally. The model is deeply closed-source and computationally massive, restricting it exclusively to Google’s TPU v5e-backed infrastructural APIs (Vertex AI and Google AI Studio) and the consumer-facing Gemini application backend.

What is the difference between Lyria 3 Pro and Lyria RealTime?

Lyria 3 Pro uses latent diffusion to look at the entire song structure simultaneously, providing strong temporal coherence over a three-minute track at the cost of high rendering latency. Lyria RealTime sacrifices global structure by using block autoregression, which lets it output audio in raw two-second chunks for streaming with sub-2-second latency.