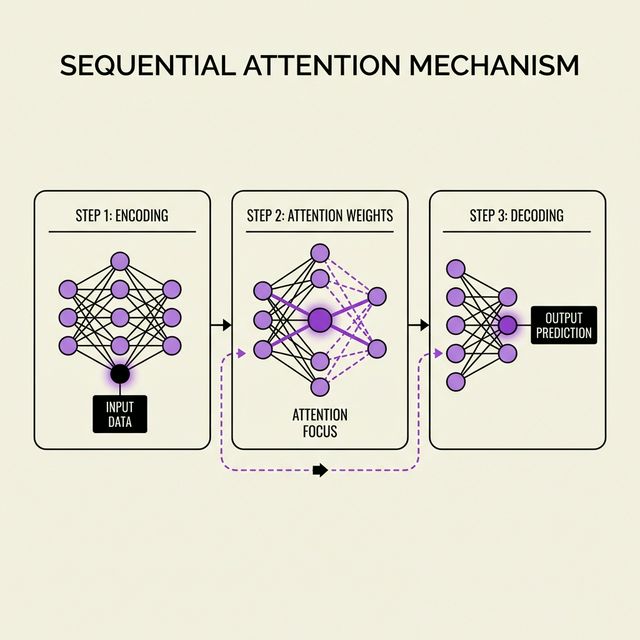

Google Research dropped Sequential Attention on Feb 4, 2026 with zero fanfare. This solver for NP-hard selection problems is pruning LLMs, selecting features, and making AI 10x leaner without accuracy loss.

Imagine an artificial intelligence (AI) that doesn’t just execute commands but actively teaches itself to solve complex…

In today’s fast-evolving AI landscape, innovative prompting techniques are reshaping how Large Language Models (LLMs) think and…