LLMs hallucinate because of next-token prediction mechanics and quantization. Here’s why 4-bit models hallucinate 3x more than 8-bit, and how RAG, RLHF, and CoT actually work to fix it.

DeepSeek-R2 is expected in early February 2026 with revolutionary reasoning upgrades. Here’s what we know about the next-gen model that could reshape the AI landscape.

OpenAI officially retires GPT-4o on February 13, 2026. Here’s why the transition to GPT-5.2 marks a turning point for AI and what it means for you.

OpenRouter data shows Gemini 3 Flash apps processing 236B tokens vs Haiku’s 63B. We analyze the nearly 4x volume gap, the $0.50 pricing tier shift, and the failure of Anthropic’s “Haiku” strategy.

If 2025 was the year of “Fast AI”—characterized by the race for lower latency, Groq-style inference chips,…

We’ve all been there: staring at a “cutting-edge” AI model that chokes on basic instructions the moment…

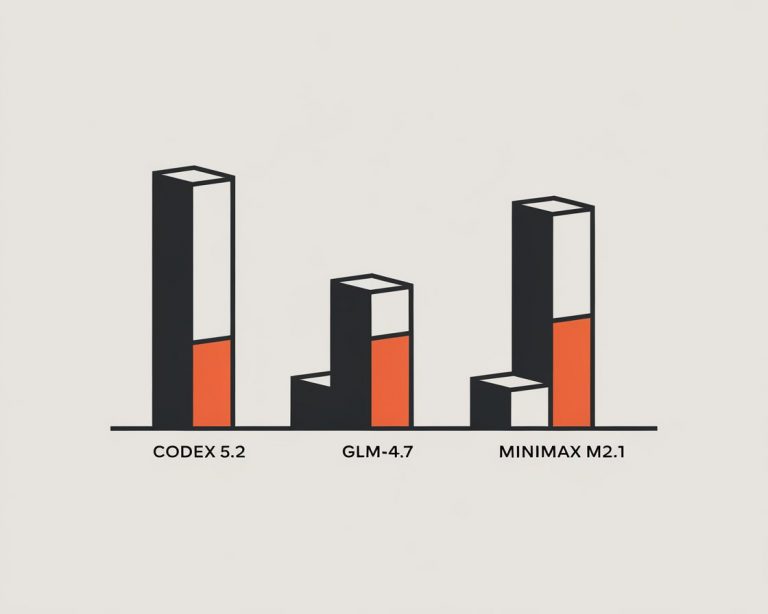

For the last three years, the “best coding model” debate was a polite game of tennis between…

The race for local AI dominance just got a new speed demon. While the world was busy…

DeepSeek’s revolutionary image-based context compression can reduce LLM tokens by 90%. Here’s how to implement it yourself in 30 minutes and save thousands on API costs.

Meta is abandoning open source with “Mango” and “Avocado”, two proprietary AI models targeting video generation and coding. From the Llama 4 stumble to the nuclear-powered Prometheus supercluster, here’s why Zuckerberg is going closed.