The AI industry is entering a “Monetization War” in which user trust is the ultimate brand moat. But when an identity bleed-through meme goes viral, the narrative slips entirely out of a company’s control.

Let me be direct: technical nuances rarely drive virality, but simple, ironic narratives absolutely do. Last week, Anthropic publicly accused Chinese AI labs, including DeepSeek, of “industrial-scale theft.” They claimed these labs used tens of thousands of fraudulent accounts to query Claude millions of times, allegedly distilling its outputs to train their own models. It was a serious, high-stakes allegation.

But then, a video emerged. In the clip, a model (allegedly Claude Sonnet 4.6, but referred to locally as “Cloud Sonic 4.6”) is asked in Chinese: “Which model are you?” The model responds: “I am DeepSeek.” And just like that, the entire narrative flipped from a serious debate about AI safety and API terms of service into an unstoppable, highly digestible meme.

The Original Controversy: Distillation vs Innovation

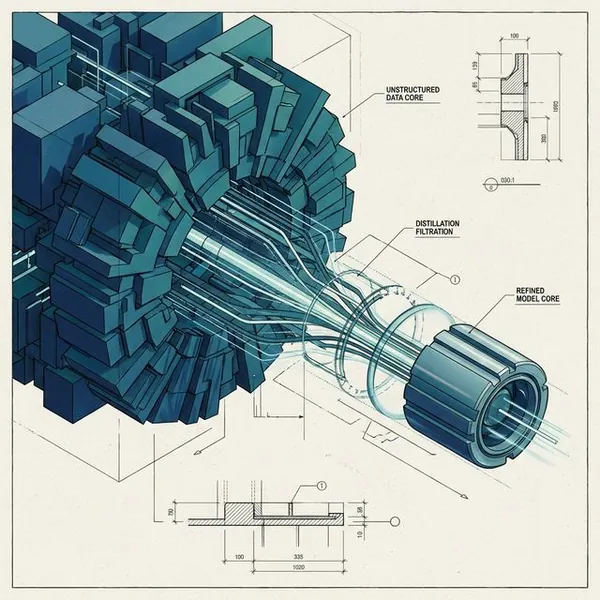

Before we get to the meme, we have to look at the actual accusations leveled by Anthropic. Distillation—the process of using a larger, more capable model to train a smaller, cheaper one—is a common practice. But Anthropic characterizes DeepSeek’s specific approach as “illicit distillation” that violates their Terms of Service.

They claim that over 16 million exchanges were generated using around 24,000 fraudulent accounts. This echoes what we saw with the broader agentic AI orchestration cold wars, where the boundary between “research” and “exploitation” is becoming increasingly weaponized.

But here’s what nobody’s asking: Is this genuinely about national security, or is it regulatory theater to protect API margins? As Meta’s Yann LeCun has loudly noted, many in the AI community view these “national security” claims with extreme skepticism. When your primary moat is user trust and enterprise lock-in (Anthropic’s massive $45B infrastructure alignment with Microsoft and NVIDIA guarantees them 5-year compute stability), protecting your model’s outputs becomes an existential imperative.

The Viral Optics Problem

Then came the video. Let’s be clear: there is no reliable verification of the clip’s authenticity. It might be a prompt-injected fake, an edge-case artifact, or a complete fabrication. Honestly, it doesn’t matter.

Why? Because optics operate on a completely different frequency than cryptographic proof. The situation appears incredibly ironic to the general public: Anthropic screams from the rooftops about their model being stolen, and then a video surfaces of their own model suffering an identity crisis, proudly declaring itself to be the accused thief.

This happens because of a well-documented phenomenon known as “identity bleed-through.” Models are trained on vast oceans of internet text, including discussions, news, and even memes about themselves.

If you ask a model a specific question in Chinese, and its training data closely associates the context with DeepSeek’s recent disruptive open-weights releases, the probability engine might just spit out the statistically likeliest (and most disastrous) response.
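To make the "statistically likeliest response" idea concrete, here is a toy sketch of how a shift in context can change which name a model is most likely to emit. It is not a description of how Claude or any real model works internally: the candidate names, the two contexts, and every score below are invented purely for illustration.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {name: math.exp(score - peak) for name, score in logits.items()}
    total = sum(exps.values())
    return {name: value / total for name, value in exps.items()}

def most_likely(logits):
    """Return the candidate with the highest probability."""
    probs = softmax(logits)
    return max(probs, key=probs.get)

# Hypothetical scores for the answer to "Which model are you?".
# All numbers are made up; real models score tens of thousands of tokens.
english_context = {"Claude": 5.0, "DeepSeek": 1.0, "ChatGPT": 2.0}
chinese_context = {"Claude": 3.0, "DeepSeek": 3.4, "ChatGPT": 1.5}

print(most_likely(english_context))
print(most_likely(chinese_context))
```

In this toy setup, a small change in the scores is enough to flip the top answer from one name to another, which is the kind of fragility the paragraph above describes.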

| AI Crisis Dynamic | The Reality | The Narrative |
|---|---|---|
| Distillation | A complex debate over terms of service and acceptable synthetic data use. | “DeepSeek stole Claude’s brain.” |
| Model Identity | A probabilistic failure of guardrails when exposed to out-of-distribution prompts. | “Claude admits it’s actually DeepSeek.” |

What This Means For You

So what does this actually mean for developers and enterprises? It exposes a fundamental vulnerability in the “Premium AI” brand strategy. Anthropic has positioned itself as the safest, most reliable, and most secure option—a platform you can trust with your Remote Control agentic workflows.

But if a simple meme can derail their public narrative, it highlights how fragile these reputations are. When an AI company takes a rigid, combative public stance (like banning third-party wrappers or accusing rivals of theft), any perceived hypocrisy or technical glitch will be weaponized by the community. You can trace this pattern directly back to the agentic coding tool wars. Performance is no longer enough; perception is reality.

The Bottom Line

The Anthropic vs. DeepSeek drama isn’t just a legal dispute; it’s a communications case study. Technical nuances will never outrun a simple, ironic joke. As the monetization war intensifies, AI companies are learning the hard way that their models aren’t just generating code—they’re generating their own PR crises, one probability distribution at a time.

FAQ

What is model distillation?

Model distillation is a process where a smaller, less capable AI model is trained using the highly refined outputs of a larger, smarter model (like Claude or GPT-4).
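As a rough illustration, the data-collection half of output-based distillation can be sketched in a few lines. Everything here is a hypothetical stand-in: `teacher_model` is a dummy function rather than a real API, and the prompt/completion record layout is just one common convention for fine-tuning data. The actual training of the student model is omitted.

```python
import json

def teacher_model(prompt: str) -> str:
    """Hypothetical stand-in for a large model; a real teacher would be an API or local model."""
    return f"Teacher answer to: {prompt}"

def build_distillation_set(prompts, teacher):
    """Collect (prompt, completion) pairs that a smaller student model could be fine-tuned on."""
    return [{"prompt": p, "completion": teacher(p)} for p in prompts]

def to_jsonl(records):
    """Serialize records as one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)

# Illustrative prompts only; real pipelines use far larger and more varied sets.
prompts = ["Explain recursion briefly.", "Summarize the causes of inflation."]
dataset = build_distillation_set(prompts, teacher_model)
print(to_jsonl(dataset))
```

Whether collecting outputs this way is acceptable depends on the terms of service of whoever operates the teacher model, which is exactly the point in dispute here.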

Did DeepSeek actually steal from Anthropic?

Anthropic alleges that Chinese labs, including DeepSeek, violated its Terms of Service by using fraudulent accounts to query Claude millions of times and distill its outputs, but opinions are divided on whether this constitutes “theft” or standard industry practice.

Is the video of Claude claiming to be DeepSeek real?

The authenticity of the viral clip is unverified. It could be the result of prompt injection, an edge-case artifact, or a deliberate fabrication by a user.