On March 27, 2026, the AI industry’s narrative shifted, and not because of a staged keynote. A misconfigured content management system at Anthropic left nearly 3,000 unpublished internal assets visible to the public. Discovered by independent security researchers, the unencrypted data store contained draft blog posts, PDFs, and internal memos.

The centerpiece? A new, unreleased model internally codenamed “Claude Mythos.”

This isn’t just another incremental update. Anthropic’s internal documentation describes Mythos as “far ahead of any other AI model in cyber capabilities.” And that phrase alone was enough to send a chill through the broader tech market.

The Unsecured Data Store

If you look closely at how the leak happened, it reveals a fascinating paradox in modern AI development. We are building systems capable of passing advanced reasoning benchmarks, yet the primary failure mode remains simple human error.

How The Cache Was Exposed

The leak wasn’t a sophisticated nation-state extraction. It was a default configuration error. Files uploaded to Anthropic’s CMS were reportedly set to be publicly accessible unless manually toggled to private by the publisher.
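The difference between a public-by-default and a private-by-default upload setting can be sketched in a few lines of Python. This is an illustration only: the `RiskyAsset` and `SaferAsset` classes, the `Visibility` enum, and the file names are hypothetical and do not represent Anthropic’s CMS or any real product.

```python
from dataclasses import dataclass
from enum import Enum


class Visibility(Enum):
    PUBLIC = "public"
    PRIVATE = "private"


@dataclass
class RiskyAsset:
    """Hypothetical record: public unless the publisher remembers to change it."""
    name: str
    visibility: Visibility = Visibility.PUBLIC


@dataclass
class SaferAsset:
    """Hypothetical record with a fail-closed default: private until published."""
    name: str
    visibility: Visibility = Visibility.PRIVATE

    def publish(self) -> None:
        # Exposure requires an explicit, deliberate action.
        self.visibility = Visibility.PUBLIC


def exposed(assets):
    """Return the names of assets an anonymous visitor could read."""
    return [a.name for a in assets if a.visibility is Visibility.PUBLIC]


drafts = ["draft-post.md", "internal-memo.pdf"]  # made-up file names
risky = [RiskyAsset(n) for n in drafts]
safer = [SaferAsset(n) for n in drafts]

print(exposed(risky))  # every upload is readable by default
print(exposed(safer))  # nothing is readable until publish() is called
```

With the first default, a single forgotten toggle exposes a file. With the second, a forgotten toggle only delays publication.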

This mirrors what we covered earlier this year in “Gemini 3.1 Pro is ‘Bench Maxing’”, where raw intelligence doesn’t automatically translate to operational security. The irony is hard to miss: documents warning about a model capable of zero-day exploitation were exposed through a mundane configuration oversight, not a zero-day.

Figure 1: The CMS misconfiguration left nearly 3,000 internal documents exposed without basic authentication.

The Capybara Tier

To understand why Mythos matters, we have to look at Anthropic’s product hierarchy. Traditionally, they’ve operated on a three-tier system: Haiku, Sonnet, and Opus.

Claude Mythos introduces a fourth tier, internally referred to as Capybara. But what does a fourth tier actually mean when Claude Sonnet 4.6 is already an agentic workhorse?

The Mechanics of the 4th Tier

This isn’t about writing better poetry or faster React components. Capybara appears to be structurally optimized for offensive and defensive systems analysis. The leaked documents suggest it can discover and exploit software vulnerabilities at a speed that outstrips current human defensive efforts.

Think of it this way. A standard model reads code and suggests refactoring. A Capybara-tier model reads an entire repository’s architecture, maps the dependencies, identifies the race conditions, and generates the explicit exploit vector—autonomously. It’s the difference between a code reviewer and a weaponized auditor.

Figure 2: The introduction of a fourth tier marks a shift from general-purpose assistants to specialized system analysts.

Market Shockwave

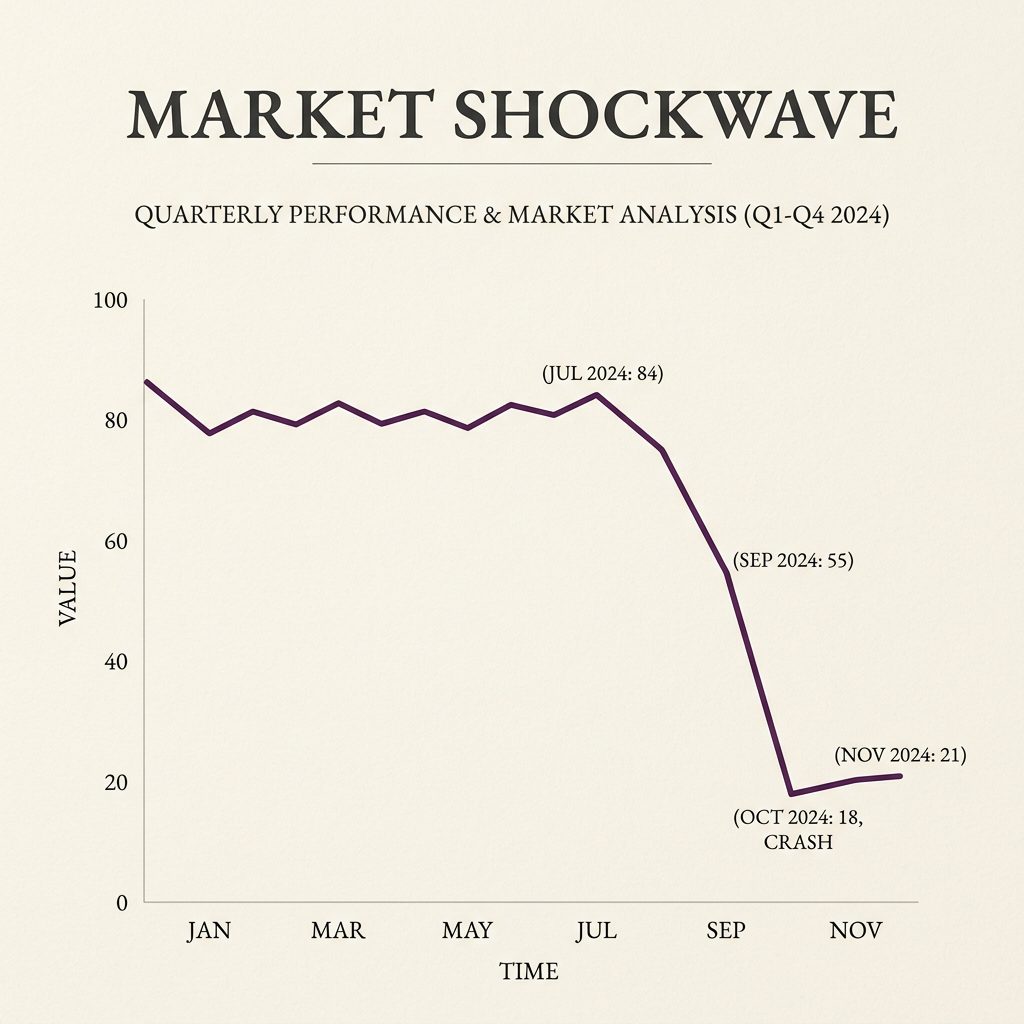

The economic reaction to the Claude Mythos leak was immediate and visceral.

When the news broke on Friday, March 27, major cybersecurity stocks plummeted. CrowdStrike dropped nearly 7%, while Palo Alto Networks and Zscaler saw similar declines. Why the sudden panic?

The Commoditization of Defense

Investors realized that a model capable of finding and exploiting vulnerabilities at machine speed could fundamentally disrupt the business models of traditional cybersecurity firms. If Anthropic provides early access to cyber-defense organizations (as the documents suggest), the same offensive capability becomes a defensive shield, one that competes directly with what those vendors sell.

This echoes the dynamic we described in “Anthropic Co-Work Update: The Real Enterprise OS.” Enterprise buyers aren’t looking for a dashboard anymore; they are looking for an autonomous agent that fixes the problem before the alert even fires. If Claude Mythos can do that natively, the entire Endpoint Detection and Response (EDR) market faces an existential valuation crisis.

Figure 3: Cybersecurity stocks experienced a sharp intraday sell-off following the leak’s confirmation.

What This Means For You

So what does this actually mean for developers and security analysts?

First, don’t expect to use Claude Mythos in your web browser next week. Anthropic is restricting the model to a select group of “early access customers” primarily focused on defensive hardening. They are using the model to patch vulnerabilities before the broader public gets its hands on it.

Second, the era of the “General Purpose” LLM is fracturing. We are moving into a phase where specific tiers of models will be gated behind significant regulatory and security clearances.

The Bottom Line

The Claude Mythos leak wasn’t just a PR nightmare; it was a preview of the next arms race. The barrier to entry for discovering zero-day vulnerabilities is dropping to zero. The only defense against an AI operating at machine speed is another AI operating at machine speed.

FAQ

What is Claude Mythos?

Claude Mythos (internally codenamed Capybara) is an unreleased, next-generation AI model from Anthropic that represents a new fourth tier, positioned significantly above Claude Opus 4.6.

Why did cybersecurity stocks drop?

Leaked internal documents revealed that Claude Mythos is highly capable of autonomously discovering and exploiting software vulnerabilities, causing investors to panic over the potential disruption to traditional security vendors.

Is Claude Mythos available to the public?

No. Training is complete, but the model is currently restricted to a tight circle of early-access customers, primarily for defensive cybersecurity hardening.